GRADIENT BOOSTED TREES

What are Gradient Boosted Trees?

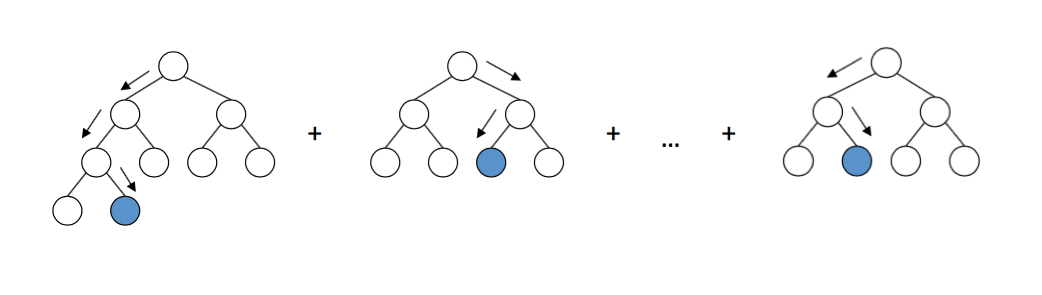

Gradient Boosted Trees (GBT) is a supervised machine learning algorithm used mainly for regression and classification. It builds an ensemble by training decision trees one after another, with each new tree fit to the errors of the ensemble built so far (for a general loss function, the negative gradient of the loss). The process starts with a simple initial model, often a constant such as the mean of the target variable, and then adds trees sequentially, usually scaling each tree's contribution by a learning rate.
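In symbols, the procedure can be written roughly as follows. The notation is introduced here for illustration and is not from the original text: $F_m$ is the ensemble after $m$ trees, $h_m$ is the $m$-th tree, $\eta$ is the learning rate, and $L$ is the loss.

```latex
\begin{align}
F_0(x) &= \arg\min_{c} \sum_{i=1}^{n} L(y_i, c) \\
r_{im} &= -\left[ \frac{\partial L(y_i, F(x_i))}{\partial F(x_i)} \right]_{F = F_{m-1}} \\
F_m(x) &= F_{m-1}(x) + \eta \, h_m(x), \quad h_m \text{ fit to } \{(x_i, r_{im})\}_{i=1}^{n}
\end{align}
```

For squared-error loss $L(y, F) = \tfrac{1}{2}(y - F)^2$, the initial model $F_0$ is the mean of the targets and $r_{im} = y_i - F_{m-1}(x_i)$ is the ordinary residual, which is why each tree is often described as correcting the errors of the previous ones.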

Concept of gradient boosted trees
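The idea described above (start from the mean, then repeatedly fit a small tree to the current errors) can be sketched from scratch in a few lines. This is a toy illustration for squared-error regression only, not how any particular library implements boosting. The helper names `fit_gbt` and `predict_gbt` and the synthetic sine data are hypothetical and chosen for this example.

```python
import numpy as np
from sklearn.tree import DecisionTreeRegressor


def fit_gbt(X, y, n_trees=50, learning_rate=0.1, max_depth=2):
    """Fit a toy gradient boosted ensemble for squared-error regression."""
    init = float(np.mean(y))            # initial model: predict the mean
    pred = np.full(len(y), init)        # current ensemble prediction
    trees = []
    for _ in range(n_trees):
        residuals = y - pred            # negative gradient of squared error
        tree = DecisionTreeRegressor(max_depth=max_depth, random_state=0)
        tree.fit(X, residuals)          # the new tree models the current errors
        pred += learning_rate * tree.predict(X)
        trees.append(tree)
    return {"init": init, "trees": trees, "learning_rate": learning_rate}


def predict_gbt(model, X):
    """Sum the initial constant and the scaled predictions of every tree."""
    pred = np.full(X.shape[0], model["init"])
    for tree in model["trees"]:
        pred += model["learning_rate"] * tree.predict(X)
    return pred


# Synthetic data, assumed for illustration: a noisy sine curve
rng = np.random.default_rng(0)
X = rng.uniform(-3, 3, size=(200, 1))
y = np.sin(X[:, 0]) + rng.normal(scale=0.1, size=200)

model = fit_gbt(X, y)
mse_init = np.mean((y - model["init"]) ** 2)        # error of the mean alone
mse_final = np.mean((y - predict_gbt(model, X)) ** 2)
print("Training MSE, mean only:", mse_init)
print("Training MSE, after boosting:", mse_final)
```

The training error after boosting should be lower than the error of the mean alone, since every added tree takes a small step toward the remaining residuals.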

Implementation of gradient boosted trees in Python

Importing libraries

Loading dataset

Defining dependent and independent variables

Splitting the dataset into training and testing sets

Applying the model

Getting prediction results
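As a minimal sketch of the steps listed above, the following assumes scikit-learn's `GradientBoostingRegressor` and a synthetic dataset from `make_regression`. Both are assumptions: the original dataset is not specified here, so the scores this sketch prints are not expected to match any figure quoted in this post. The variable names `gbr`, `X_train`, and `Y_train` follow the cross-validation call used in this section.

```python
# Importing libraries
from sklearn.datasets import make_regression
from sklearn.ensemble import GradientBoostingRegressor
from sklearn.metrics import r2_score
from sklearn.model_selection import train_test_split

# Loading dataset (synthetic stand-in, assumed for illustration)
# X holds the independent variables, Y the dependent variable
X, Y = make_regression(
    n_samples=500, n_features=8, n_informative=5, noise=10.0, random_state=42
)

# Splitting the dataset into training and testing sets
X_train, X_test, Y_train, Y_test = train_test_split(
    X, Y, test_size=0.2, random_state=42
)

# Applying the model
gbr = GradientBoostingRegressor(random_state=42)
gbr.fit(X_train, Y_train)

# Getting prediction results
Y_pred = gbr.predict(X_test)
print("Test R^2:", r2_score(Y_test, Y_pred))
```

With `gbr`, `X_train`, and `Y_train` defined this way, `cross_val_score` from `sklearn.model_selection` can be called on them to get a cross-validated score on the training set.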

cross_val_score(gbr, X_train, Y_train, cv=3, n_jobs=-1).mean()

0.9221335697399526

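Parameters that you can tune in gradient boosted trees

The names below come from two libraries: min_samples_split and min_samples_leaf are scikit-learn parameters, while eta, colsample_bytree, gamma, alpha, lambda, scale_pos_weight, the booster type, and tree_method are XGBoost parameters.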

Learning Rate (eta, or learning_rate):

- Scales the contribution of each tree; a lower rate slows learning and usually needs more trees, but may improve generalization.

Number of Estimators (n_estimators):

- Total number of trees added to the model; more trees can improve performance but increase training time and may overfit.

Maximum Depth (max_depth):

- Maximum depth of each tree; deeper trees can capture complex relationships but may lead to overfitting.

Minimum Samples Split (min_samples_split):

- Minimum number of samples required to split an internal node; helps prevent overfitting by ensuring nodes contain sufficient samples.

Minimum Samples Leaf (min_samples_leaf):

- Minimum number of samples required to be at a leaf node; increasing this number can smooth the model and reduce overfitting.

Subsample:

- Fraction of samples used for fitting each tree; introduces randomness and helps prevent overfitting.

Column Subsample (colsample_bytree):

- Fraction of features (columns) sampled once for each tree; adds randomness to reduce overfitting.

Gamma (min_split_loss):

- Minimum loss reduction required to make a further partition on a leaf node; a larger value leads to a more conservative model.

Regularization Parameters (alpha and lambda):

- Alpha (L1 regularization): Adds a penalty proportional to the absolute value of the leaf weights; helps reduce overfitting.

- Lambda (L2 regularization): Adds a penalty proportional to the square of the leaf weights; helps control model complexity.

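Scale Pos Weight (scale_pos_weight):

- Used on imbalanced binary classification datasets to rebalance the weights of the positive and negative classes so that errors on the minority (positive) class count more; a common starting value is the ratio of negative to positive samples.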

Boosting Type (booster):

- Defines the type of base learner to use ('gbtree', 'gblinear', or 'dart'); 'gbtree' and 'dart' build trees, while 'gblinear' fits linear models instead.

Early Stopping:

- Technique to stop training when performance on a validation set no longer improves; helps prevent overfitting.

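Tree Method (tree_method):

- Specifies the tree construction algorithm to use (e.g., 'exact', 'approx', 'hist'); 'exact' evaluates every candidate split, while 'approx' and 'hist' use approximate or histogram-based splits that scale better to large datasets.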
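A short sketch of how several of these parameters might be set in practice, assuming scikit-learn's `GradientBoostingRegressor` and synthetic data (both are assumptions made for this example). Only the parameters that this estimator exposes are shown. The XGBoost-specific ones (`colsample_bytree`, `gamma`, `alpha`, `lambda`, `scale_pos_weight`, `booster`, `tree_method`) are not arguments of this class. Here `max_features` plays a similar role to column subsampling, but it samples features at each split rather than once per tree, and early stopping is configured through `n_iter_no_change` with `validation_fraction`.

```python
from sklearn.datasets import make_regression
from sklearn.ensemble import GradientBoostingRegressor
from sklearn.model_selection import train_test_split

# Synthetic data, assumed for illustration
X, y = make_regression(n_samples=600, n_features=10, noise=15.0, random_state=0)
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.25, random_state=0
)

gbr = GradientBoostingRegressor(
    learning_rate=0.05,       # smaller steps, so more trees are usually needed
    n_estimators=500,         # upper bound on the number of trees
    max_depth=3,              # shallow trees limit model complexity
    min_samples_split=10,     # a node needs at least 10 samples to be split
    min_samples_leaf=5,       # every leaf keeps at least 5 samples
    subsample=0.8,            # each tree sees a random 80% of the rows
    max_features=0.8,         # 80% of the features considered at each split
    validation_fraction=0.1,  # held-out share of training data for early stopping
    n_iter_no_change=10,      # stop after 10 rounds without improvement
    random_state=0,
)
gbr.fit(X_train, y_train)

print("Trees actually fitted:", gbr.n_estimators_)
print("Test R^2:", gbr.score(X_test, y_test))
```

The values above are arbitrary starting points rather than recommendations; in practice they are usually chosen with cross-validation or a search such as `GridSearchCV`.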

Advantages and disadvantages of gradient boosted trees

Advantages

- High Accuracy: Gradient boosting often achieves strong predictive performance, because each new tree reduces the errors left by the trees before it.

- Handles Complex Data Well: It can capture complex, non-linear patterns in the data, making it suitable for diverse applications like finance and healthcare.

- Feature Importance: It naturally provides feature importance scores, helping to identify the most influential features for the predictions.
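The feature importance scores mentioned above can be read from a fitted model. The sketch below assumes scikit-learn's `GradientBoostingRegressor` and its `feature_importances_` attribute, with a synthetic dataset in which only the first two of six features carry signal. The feature names are hypothetical placeholders.

```python
import numpy as np
from sklearn.datasets import make_regression
from sklearn.ensemble import GradientBoostingRegressor

# shuffle=False keeps the two informative features in the first two columns
X, y = make_regression(
    n_samples=500,
    n_features=6,
    n_informative=2,
    noise=5.0,
    shuffle=False,
    random_state=0,
)
feature_names = [f"feature_{i}" for i in range(X.shape[1])]  # placeholder names

gbr = GradientBoostingRegressor(random_state=0).fit(X, y)

# Impurity-based importances, normalized to sum to 1
importances = gbr.feature_importances_
for i in np.argsort(importances)[::-1]:
    print(f"{feature_names[i]}: {importances[i]:.3f}")
```

These scores are impurity-based, so they describe how much each feature was used to reduce the training loss, not a causal effect.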

Disadvantages

- Computationally Intensive: Trees are built sequentially, so training cannot be parallelized across trees; it can be time-consuming and resource-hungry, especially with large datasets.

- Sensitive to Overfitting: Gradient boosting can overfit, particularly with noisy data, so it often requires careful parameter tuning.

- Hard to Interpret: The model complexity makes gradient boosted trees less interpretable compared to simpler models, limiting transparency in decision-making.
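The overfitting risk noted above can be inspected by tracking training and test error as trees are added. This is a minimal sketch assuming scikit-learn's `GradientBoostingRegressor` and its `staged_predict` method, which yields predictions after each boosting stage. The noisy synthetic dataset and the settings are assumptions chosen to make the gap between the two errors visible.

```python
import numpy as np
from sklearn.datasets import make_regression
from sklearn.ensemble import GradientBoostingRegressor
from sklearn.metrics import mean_squared_error
from sklearn.model_selection import train_test_split

# Small, noisy synthetic dataset (assumed for illustration)
X, y = make_regression(n_samples=300, n_features=10, noise=50.0, random_state=1)
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.3, random_state=1
)

gbr = GradientBoostingRegressor(
    n_estimators=300, learning_rate=0.1, max_depth=4, random_state=1
)
gbr.fit(X_train, y_train)

# Error after each boosting stage, on data the model has and has not seen
train_mse = [mean_squared_error(y_train, p) for p in gbr.staged_predict(X_train)]
test_mse = [mean_squared_error(y_test, p) for p in gbr.staged_predict(X_test)]

best_n = int(np.argmin(test_mse)) + 1  # stage with the lowest test error
print("Trees with lowest test MSE:", best_n)
print("Train MSE at last stage:", train_mse[-1])
print("Test MSE at last stage:", test_mse[-1])
```

Training error keeps falling as trees are added, while test error typically flattens or rises after some point; the stage where test error bottoms out suggests a reasonable value for the number of trees.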
