SUPPORT VECTOR MACHINE (SVM)

Figure 1: Support Vector Machine

What is SVM

Concept of SVM

Scenario

Figure 2: Example of dosage data

Figure 3: Example of dosage data

Figure 4: Example of data

1) Margins

- Maximal margin classifiers

- Soft margin classifiers

Maximal Margin Classifiers

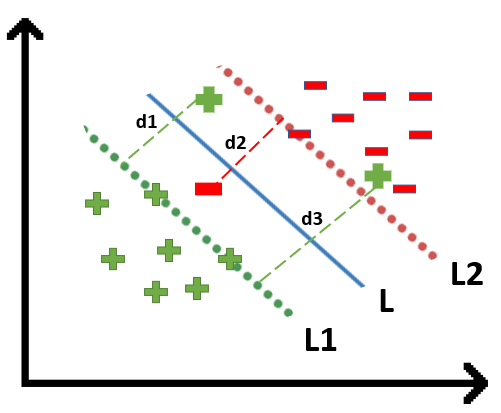

Figure 5: Example of data
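To make the maximal margin idea concrete, here is a minimal sketch using scikit-learn (an assumption: the post does not name a library for this part) on hypothetical one-dimensional dosage values. A linear `SVC` with a large `C` leaves essentially no room for misclassification, so it approximates the maximal margin classifier: its threshold should land at the midpoint between the two edge observations, and its margin width `2 / |w|` should equal the gap between them.

```python
import numpy as np
from sklearn.svm import SVC

# Hypothetical 1-D dosages: low doses are "not cured" (-1), high doses are "cured" (+1)
dosage = np.array([1.0, 2.0, 3.0, 7.0, 8.0, 9.0]).reshape(-1, 1)
cured = np.array([-1, -1, -1, 1, 1, 1])

# Midpoint between the observations at the inner edges of the two clusters
edge_not_cured = dosage[cured == -1].max()
edge_cured = dosage[cured == 1].min()
midpoint = (edge_not_cured + edge_cured) / 2

# A large C approximates a hard (maximal) margin on separable data
clf = SVC(kernel="linear", C=1000).fit(dosage, cured)
w = clf.coef_[0, 0]
b = clf.intercept_[0]

threshold = -b / w          # the point where w * x + b = 0
margin_width = 2 / abs(w)   # distance between the lines w * x + b = +1 and w * x + b = -1

print(f"midpoint={midpoint:.2f} threshold={threshold:.2f} margin={margin_width:.2f}")
```

With these values the threshold comes out at about 5 and the margin at about 4, the distance between dosages 3 and 7.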

Soft margin classifiers

Figure 6: Example of data

Figure 7: Example of data
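A soft margin classifier tolerates some misclassifications. The sketch below uses NumPy and hypothetical data with one outlier to compute the slack (hinge) term for a candidate threshold; the weight `w`, offset `b` and `C` are illustrative values, not a fitted solution. In practice the soft margin is chosen by minimizing `0.5 * w**2 + C * sum(slack)`, with `C` typically picked by cross-validation.

```python
import numpy as np

# Hypothetical 1-D dosages with one "not cured" outlier at dosage 6
x = np.array([1.0, 2.0, 3.0, 6.0, 7.0, 8.0, 9.0])
y = np.array([-1, -1, -1, -1, 1, 1, 1])

# Candidate soft margin classifier with its threshold at 5 (w * x + b = 0 when x = 5)
w, b = 0.5, -2.5

# Slack xi_i = max(0, 1 - y_i * (w * x_i + b)):
#   0       -> correctly classified and on or outside the margin
#   (0, 1]  -> inside the margin but on the correct side
#   > 1     -> misclassified
slack = np.maximum(0.0, 1.0 - y * (w * x + b))

# Soft margin objective for a chosen C: 0.5 * w^2 + C * sum of slacks
C = 1.0
objective = 0.5 * w**2 + C * slack.sum()

print(slack)      # only the outlier at dosage 6 has non-zero slack (1.5)
print(objective)  # 1.625
```

The outlier is allowed to sit on the wrong side of the threshold; it simply adds its slack to the objective instead of forcing the threshold to move.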

2) Hyperplane

| Figure 8: Example of mass data |

|

| Figure 9: Example of data in 2D |

|

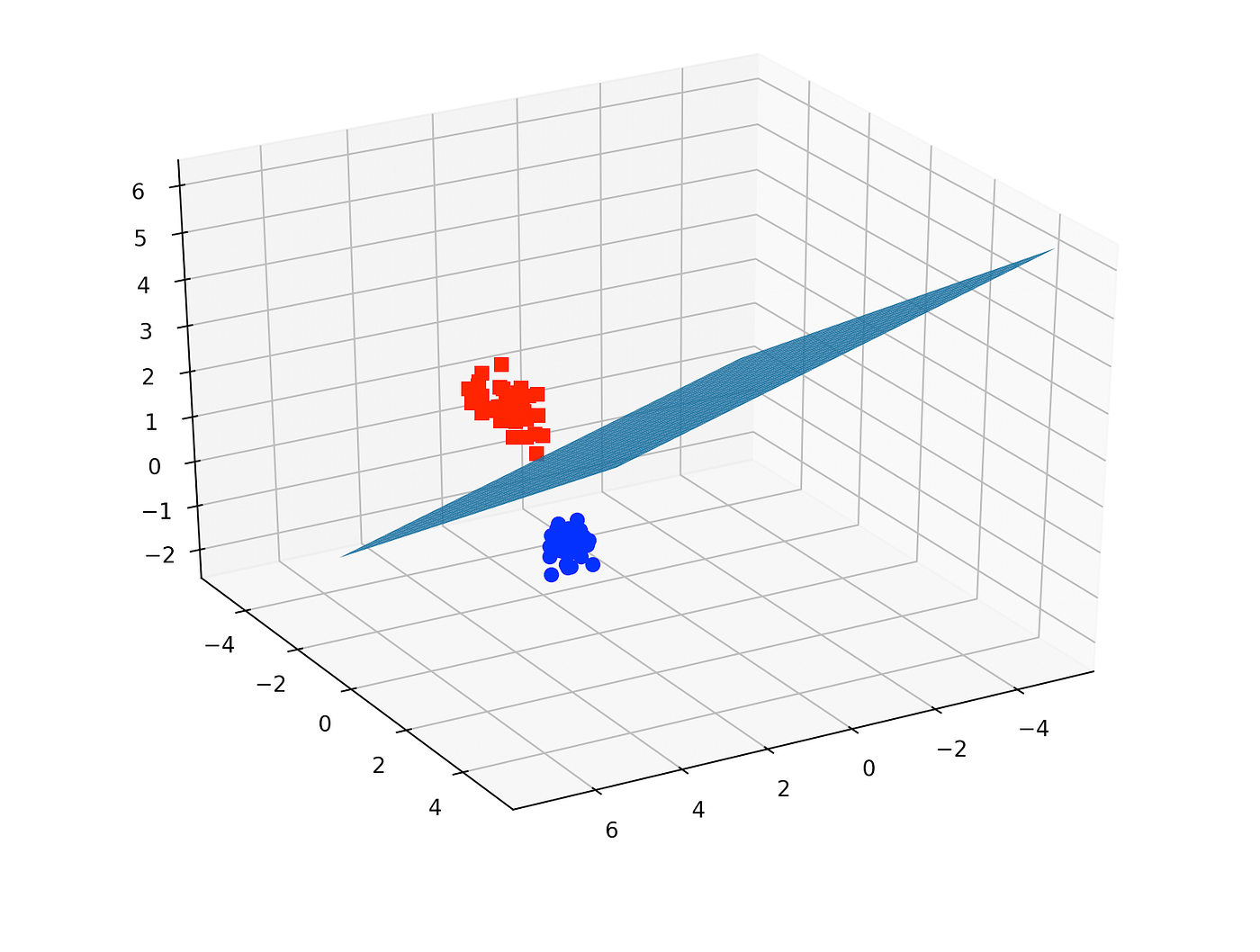

| Figure 10: Example of data in 3D |
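The hyperplane is the set of points where `w · x + b = 0`. As a hedged sketch with scikit-learn's linear `SVC` on synthetic blobs (hypothetical data), the code below recovers `w` and `b` in 2-D and in 3-D and uses the sign of `w · x + b` to decide the class; the only difference between the two cases is the length of `w`.

```python
import numpy as np
from sklearn.datasets import make_blobs
from sklearn.svm import SVC

fitted = {}
for n_features in (2, 3):
    # Two hypothetical clusters, first in 2-D and then in 3-D
    X, y = make_blobs(n_samples=60, centers=2, n_features=n_features, random_state=0)
    clf = SVC(kernel="linear", C=1.0).fit(X, y)

    w = clf.coef_[0]        # normal vector of the hyperplane, one entry per feature
    b = clf.intercept_[0]   # offset of the hyperplane

    # The hyperplane is {x : w . x + b = 0}: a line in 2-D, a plane in 3-D.
    # The signed value w . x + b is what decision_function returns.
    scores = X @ w + b

    # The side of the hyperplane (the sign of the score) decides the class
    predicted = np.where(scores > 0, clf.classes_[1], clf.classes_[0])
    fitted[n_features] = (X, clf, scores, predicted)
    print(f"{n_features}-D: w = {np.round(w, 3)}, b = {b:.3f}")
```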

3) Support vectors

Figure 11: Example of mass data

Figure 12: Example of data
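Support vectors are the training points closest to the decision boundary. A minimal sketch, assuming scikit-learn and synthetic separable blobs: the fitted model exposes them as `support_vectors_` (with their row indices in `support_`), and with a large `C` they lie on the margin (`y * f(x)` close to 1) while every other point has `y * f(x)` of at least 1.

```python
import numpy as np
from sklearn.datasets import make_blobs
from sklearn.svm import SVC

# Hypothetical separable 2-D data
X, y = make_blobs(n_samples=40, centers=2, random_state=6)
clf = SVC(kernel="linear", C=1000).fit(X, y)

print("training points:", len(X))
print("support vectors:", len(clf.support_vectors_))
print(clf.support_vectors_)

# Functional margin y_i * f(x_i), with the labels mapped to -1 / +1
y_signed = np.where(y == clf.classes_[1], 1, -1)
functional_margin = y_signed * clf.decision_function(X)

# Mark which training points are support vectors
is_sv = np.zeros(len(X), dtype=bool)
is_sv[clf.support_] = True

print("support vectors, y*f(x):", np.round(functional_margin[is_sv], 3))
print("smallest y*f(x) among the rest:", functional_margin[~is_sv].min())
```

Only the support vectors are needed to define the boundary; moving any other point (without crossing the margin) leaves the classifier unchanged.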

4) Kernels

Polynomial Kernel

- The high-dimensional coordinates for the data

- High-dimensional relationships

- First term = x-axis coordinates

- Second term = y-axis coordinates

- Third term = z-axis coordinates (if it is the same for every observation, it can be ignored)

The high-dimensional relationships can be obtained by plugging the values into the kernel.
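A worked check of the kernel trick for the polynomial kernel `(a * b + r) ** d`, using the illustrative values `r = 1/2` and `d = 2` (assumptions; the post's own figures are not reproduced). Expanding gives `a*b + a²b² + 1/4`, which is the dot product of the coordinates `(a, a², 1/2)` and `(b, b², 1/2)`: the first term is the x-axis coordinate, the second the y-axis coordinate, and the constant third term is the z-axis coordinate that is the same for every point. The helpers `polynomial_kernel` and `feature_map` are hypothetical names for illustration.

```python
import numpy as np

def polynomial_kernel(a, b, r=0.5, d=2):
    """Polynomial kernel (a * b + r) ** d for 1-D observations."""
    return (a * b + r) ** d

def feature_map(x, r=0.5):
    """Explicit coordinates for d = 2, since (a*b + r)^2 = (sqrt(2r)*a, a^2, r) . (sqrt(2r)*b, b^2, r).

    With r = 0.5, sqrt(2r) = 1, so the coordinates are (x, x^2, 0.5).
    """
    return np.array([np.sqrt(2 * r) * x, x ** 2, r])

a, b = 2.0, 3.0  # two hypothetical dosages

via_kernel = polynomial_kernel(a, b)               # (2*3 + 0.5)^2
via_coordinates = feature_map(a) @ feature_map(b)  # 2*3 + 4*9 + 0.5*0.5

print(via_kernel, via_coordinates)  # 42.25 42.25
```

Both routes give the same relationship, but the kernel never builds the high-dimensional coordinates; this is what keeps large values of `d` affordable.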

Radial Basis Function (RBF) Kernel

Figure 13: Example of data

Figure 14: Formula of RBF Kernel
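The RBF kernel is commonly written as `K(a, b) = exp(-γ ||a - b||²)`, where `γ` (gamma) controls how quickly the similarity decays with distance. A minimal sketch comparing a hand-written version (the helper `rbf` is hypothetical) with scikit-learn's `rbf_kernel` on hypothetical dosages:

```python
import numpy as np
from sklearn.metrics.pairwise import rbf_kernel

def rbf(a, b, gamma):
    """RBF kernel exp(-gamma * ||a - b||^2) between two vectors."""
    return np.exp(-gamma * np.sum((a - b) ** 2))

# Hypothetical 1-D dosages, stored as column vectors for scikit-learn
X = np.array([[1.0], [2.0], [8.0]])
gamma = 1.0

manual = np.array([[rbf(a, b, gamma) for b in X] for a in X])
library = rbf_kernel(X, X, gamma=gamma)

print(np.round(manual, 4))
# Each point has similarity 1 with itself; the nearby pair (1, 2) scores far
# higher than the distant pair (1, 8), so close observations dominate predictions.
```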

Parameters that you can tune for SVM

Kernel cache

Specifies how much memory is allocated for the cache of the kernel matrix. Setting it large enough to hold the kernel matrix in memory can significantly improve computational efficiency, at the cost of a larger memory footprint.
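In scikit-learn (used here as an analogue; it may not be the tool whose parameter list this section describes), the kernel cache is the `cache_size` argument of `SVC`, given in megabytes with a default of 200. This sketch fits the same model on synthetic data with a tiny and a large cache; the cache should only affect speed and memory, not the fitted predictions.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.svm import SVC

X, y = make_classification(n_samples=500, n_features=10, random_state=0)

# cache_size is in MB; a bigger cache means fewer kernel values are recomputed
small_cache = SVC(kernel="rbf", cache_size=1).fit(X, y)
large_cache = SVC(kernel="rbf", cache_size=500).fit(X, y)

same = np.array_equal(small_cache.predict(X), large_cache.predict(X))
print("identical predictions:", same)
```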

C

A regularization parameter that controls the trade-off between achieving a low error on the training sample and minimizing the norm of the weights. A small C gives a large margin that tolerates some misclassifications; a large C gives a small margin with few misclassifications on the training data.
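A hedged sketch of this trade-off using scikit-learn's linear `SVC` on hypothetical overlapping blobs: as `C` grows, the margin width `2 / ||w||` shrinks and fewer training points end up as support vectors.

```python
import numpy as np
from sklearn.datasets import make_blobs
from sklearn.svm import SVC

# Hypothetical 2-D classes with some overlap
X, y = make_blobs(n_samples=200, centers=2, cluster_std=2.5, random_state=0)

results = {}
for C in (0.01, 1.0, 100.0):
    clf = SVC(kernel="linear", C=C).fit(X, y)
    margin_width = 2 / np.linalg.norm(clf.coef_)
    train_acc = clf.score(X, y)
    results[C] = (margin_width, len(clf.support_), train_acc)
    print(f"C={C:<6} margin={margin_width:.3f} "
          f"support_vectors={len(clf.support_)} train_acc={train_acc:.3f}")
```

Because a wider margin is not automatically better, `C` is usually tuned with cross-validation rather than fixed in advance.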

Epsilon for convergence

The tolerance for the optimization algorithm, which defines its stopping criterion: if the change in the objective value is smaller than the chosen epsilon, the algorithm stops. Smaller values of epsilon give a more precise solution but require more iterations.

Maximum iterations

The prespecified maximum number of iterations the algorithm may run. If this limit is reached, the algorithm is terminated regardless of whether the convergence criterion has been met.
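scikit-learn exposes these two settings as `tol` (default `1e-3`) and `max_iter` (default `-1`, meaning no limit) on `SVC`. The sketch below, on synthetic data, fits with a loose and a tight tolerance and then caps the solver at 5 iterations. The attribute `fit_status_` is 0 when the solver converged and 1 when it stopped early, in which case scikit-learn emits a `ConvergenceWarning`.

```python
import warnings

from sklearn.datasets import make_classification
from sklearn.exceptions import ConvergenceWarning
from sklearn.svm import SVC

X, y = make_classification(n_samples=300, n_features=5, random_state=0)

# tol: stopping tolerance; smaller values give a more precise solution but take longer
loose = SVC(tol=1e-1).fit(X, y)
tight = SVC(tol=1e-6).fit(X, y)

# max_iter: hard cap on solver iterations; 5 is far too few for this problem
with warnings.catch_warnings(record=True) as caught:
    warnings.simplefilter("always")
    capped = SVC(max_iter=5).fit(X, y)

print("fit_status_:", loose.fit_status_, tight.fit_status_, capped.fit_status_)
print("warnings:", [w.category.__name__ for w in caught])
```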

Scale

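The data should be scaled before the SVM algorithm is executed. SVMs rely on distances and dot products between observations, so features with large numeric ranges would otherwise dominate the result.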
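A minimal sketch of scaling before an SVM, assuming scikit-learn's `StandardScaler` inside a pipeline and the bundled breast cancer dataset as a stand-in, chosen because its features span very different ranges. Putting the scaler in the pipeline fits it on the training split only, so no information from the test split leaks into the scaling.

```python
import numpy as np
from sklearn.datasets import load_breast_cancer
from sklearn.model_selection import train_test_split
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler
from sklearn.svm import SVC

X, y = load_breast_cancer(return_X_y=True)
X_train, X_test, y_train, y_test = train_test_split(X, y, random_state=0, stratify=y)

unscaled = SVC(kernel="rbf").fit(X_train, y_train)
scaled = make_pipeline(StandardScaler(), SVC(kernel="rbf")).fit(X_train, y_train)

print("unscaled accuracy:", round(unscaled.score(X_test, y_test), 3))
print("scaled accuracy:  ", round(scaled.score(X_test, y_test), 3))

# After scaling, each training feature has mean 0 and standard deviation 1
X_train_scaled = scaled.named_steps["standardscaler"].transform(X_train)
```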

L pos

The loss weight for samples in the positive class. It lets you penalize a misclassified positive sample differently from a misclassified negative one, which helps counter problems caused by imbalanced data.

L neg

The loss weight for samples in the negative class. It lets the model apply a different penalty to misclassified negative samples.
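scikit-learn has no parameters named L pos or L neg; the closest analogue (an assumption about equivalence) is `class_weight`, which multiplies `C` separately for each class, so a larger weight makes misclassifying that class more expensive. The sketch uses hypothetical imbalanced data and gives the positive class a weight of 5.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.svm import SVC

# Hypothetical imbalanced data: roughly 90% negatives (0) and 10% positives (1)
X, y = make_classification(n_samples=400, weights=[0.9, 0.1], random_state=0)

# Equal loss weights versus a 5x penalty on misclassified positives
plain = SVC(kernel="linear").fit(X, y)
weighted = SVC(kernel="linear", class_weight={0: 1.0, 1: 5.0}).fit(X, y)

print("per-class C multipliers:", weighted.class_weight_)
print("positives predicted (plain):   ", int((plain.predict(X) == 1).sum()))
print("positives predicted (weighted):", int((weighted.predict(X) == 1).sum()))
```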

Epsilon

Defines the epsilon tube within which errors carry no penalty in the loss function during training. In other words, it sets a margin of tolerance around the actual values.

Epsilon plus

The allowed positive deviation from the actual values within which a prediction is considered acceptable.

Epsilon minus

The allowed negative deviation from the actual values within which a prediction is considered acceptable.
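The epsilon tube can be written as a loss that is zero for small deviations. The helper below is hypothetical: it generalizes the standard epsilon-insensitive loss to separate `eps_plus` and `eps_minus` tolerances, assuming that a positive deviation means the prediction lies above the actual value. For comparison, scikit-learn's `SVR` only supports a symmetric tube through its `epsilon` argument.

```python
import numpy as np

def epsilon_insensitive_loss(y_true, y_pred, eps_plus=0.1, eps_minus=0.1):
    """Hypothetical helper: zero loss inside the tube [y - eps_minus, y + eps_plus].

    Assumption: a "positive deviation" means the prediction is above the actual value.
    With eps_plus == eps_minus this is the usual symmetric epsilon-insensitive loss.
    """
    residual = np.asarray(y_pred) - np.asarray(y_true)
    above = np.maximum(0.0, residual - eps_plus)    # too high, beyond the upper edge
    below = np.maximum(0.0, -residual - eps_minus)  # too low, beyond the lower edge
    return above + below

y_true = np.array([1.0, 1.0, 1.0])
y_pred = np.array([1.05, 1.3, 0.5])
loss = epsilon_insensitive_loss(y_true, y_pred, eps_plus=0.1, eps_minus=0.2)
print(np.round(loss, 3))  # 0 (inside the tube), 0.2, 0.3
```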

Cost balance

Balances the cost of misclassification across different classes. It becomes very useful for working with imbalanced datasets where one class is much more frequent than the other.
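scikit-learn's version of cost balancing is `class_weight="balanced"`, which weights each class by `n_samples / (n_classes * count_of_class)`. A minimal sketch with hypothetical labels (90 negatives, 10 positives), checking the weights with `compute_class_weight`; whether this matches the exact cost-balance rule of the tool described above is an assumption.

```python
import numpy as np
from sklearn.svm import SVC
from sklearn.utils.class_weight import compute_class_weight

# Hypothetical imbalanced labels and features (positives shifted by +2)
y = np.array([0] * 90 + [1] * 10)
X = np.random.default_rng(0).normal(size=(100, 2)) + y[:, None] * 2.0

# "balanced": n_samples / (n_classes * count) -> 100 / (2 * 90) and 100 / (2 * 10)
weights = compute_class_weight(class_weight="balanced", classes=np.array([0, 1]), y=y)
print(weights)

# SVC applies the same rule and stores the resulting C multipliers
clf = SVC(kernel="rbf", class_weight="balanced").fit(X, y)
print(clf.class_weight_)
```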

Quadratic loss pos

Misclassified positive instances are penalized quadratically, in proportion to the square of their distance from the separating hyperplane, instead of linearly.

Quadratic loss neg

The penalty for misclassified negative instances grows quadratically with their distance from the decision boundary.
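The difference between linear and quadratic loss is easiest to see on the slack `max(0, 1 - y * f(x))`. The hypothetical helper `svm_loss` below squares the slack for the positive class, the negative class, or both. For comparison, scikit-learn's `LinearSVC` uses the squared hinge loss (`loss="squared_hinge"`) by default, but for both classes at once.

```python
import numpy as np

def svm_loss(y, decision, quadratic_pos=False, quadratic_neg=False):
    """Hypothetical helper: per-sample hinge loss, squared for the chosen class(es).

    y must contain -1 / +1 labels; decision holds f(x) = w . x + b.
    """
    slack = np.maximum(0.0, 1.0 - y * decision)
    squared = np.where(y == 1, quadratic_pos, quadratic_neg)
    return np.where(squared, slack ** 2, slack)

y = np.array([1, 1, 1, -1, -1])
decision = np.array([2.0, 0.5, -1.0, 0.5, -3.0])

print(svm_loss(y, decision))                      # linear loss for both classes
print(svm_loss(y, decision, quadratic_pos=True))  # squared loss for positives only
```

Squaring makes small slacks (below 1) cheaper and large slacks more expensive, so the quadratic loss reacts more strongly to badly misclassified points.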

Kernel types

- Dot: The simplest kernel, computing the dot product of two vectors. It suits linearly separable data, so the decision boundary is a straight line, or a hyperplane in higher dimensions.

- Radial Basis Function Kernel (RBF Kernel): Maps data into an infinite-dimensional space. It works well when the relationship between class labels and attributes is nonlinear.

- Polynomial Kernel: Represents similarity in a polynomial feature space, which lets it separate data non-linearly.

- Neural or Sigmoid Kernel: Computes tanh(γ·(x·y) + r) for parameters γ and r, mirroring the activation function used in neural networks. It is applied in problems where the relationship between classes is complex.

- ANOVA (Analysis of Variance) Kernel: Built from per-feature, RBF-like exponential terms combined with a degree parameter. Despite the name, it does not compute variances between groups; it is reported to perform well in multidimensional regression problems.

- Epanechnikov Kernel: Mainly used for density estimation. It is less common in support vector machines, while in kernel density estimation it is used to smooth data non-parametrically.

- Gaussian Combination Kernel: Combines several Gaussian kernels with different parameters, which adds flexibility to fit the structure of the data better.

- Multiquadric Kernel: Belongs to the family of radial basis function kernels and is used in interpolation problems, particularly in geostatistics.
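Not every kernel in this list has a built-in equivalent in scikit-learn: `SVC` accepts `"linear"` (the dot kernel), `"poly"`, `"rbf"` and `"sigmoid"` (the neural kernel), and otherwise takes a callable that returns the Gram matrix. The sketch compares these on synthetic two-moons data and adds a hypothetical ANOVA kernel, assuming the common definition as a sum over features of `exp(-γ (x_k - y_k)²)` raised to a degree `d`; other tools may define it differently.

```python
import numpy as np
from sklearn.datasets import make_moons
from sklearn.model_selection import train_test_split
from sklearn.svm import SVC

def anova_kernel(X, Y, gamma=1.0, degree=2):
    """Hypothetical ANOVA kernel: sum over features k of exp(-gamma * (x_k - y_k)^2) ** degree.

    Returns the Gram matrix of shape (len(X), len(Y)), as SVC expects from a callable kernel.
    """
    diff = X[:, None, :] - Y[None, :, :]  # shape (n_X, n_Y, n_features)
    return (np.exp(-gamma * diff ** 2) ** degree).sum(axis=2)

X, y = make_moons(n_samples=300, noise=0.2, random_state=0)
X_train, X_test, y_train, y_test = train_test_split(X, y, random_state=0)

scores = {}
for kernel in ["linear", "poly", "rbf", "sigmoid", anova_kernel]:
    clf = SVC(kernel=kernel).fit(X_train, y_train)
    name = kernel if isinstance(kernel, str) else kernel.__name__
    scores[name] = clf.score(X_test, y_test)
    print(f"{name:>12}: test accuracy {scores[name]:.3f}")
```

Which kernel wins depends on the data, so in practice the kernel and its parameters are compared with cross-validation.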

Implementation of SVM in Python

Import the dataset

Getting a preview of the dataset

Checking dataset completeness

Data transformation using OrdinalEncoder

Understanding the correlation between features

Splitting the data into training and testing

Implementing the SVM model

Test model performance
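The code for these steps is not reproduced in the text, so the following is only a sketch of the same workflow under assumptions: a small synthetic pandas DataFrame with hypothetical columns (`age`, `income`, `city`, `purchased`) stands in for the real dataset, and an RBF `SVC` with feature scaling is used as the model. To use your own data, replace the synthetic block with `pd.read_csv` on your file. Note that `OrdinalEncoder` assigns an arbitrary order to nominal categories such as `city`; one-hot encoding is often a safer choice for unordered categories.

```python
import numpy as np
import pandas as pd
from sklearn.metrics import accuracy_score, classification_report
from sklearn.model_selection import train_test_split
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import OrdinalEncoder, StandardScaler
from sklearn.svm import SVC

# Import the dataset: hypothetical synthetic stand-in for the real file
rng = np.random.default_rng(0)
n = 300
df = pd.DataFrame({
    "age": rng.integers(18, 70, size=n),
    "income": rng.normal(50_000, 15_000, size=n).round(),
    "city": rng.choice(["north", "south", "east"], size=n),
})
# Hypothetical target: "yes" when a noisy score built from age and income is positive
score = 0.04 * (df["age"] - 44) + (df["income"] - 50_000) / 15_000 + rng.normal(0, 0.5, size=n)
df["purchased"] = np.where(score > 0, "yes", "no")

# Getting a preview of the dataset
print(df.head())

# Checking dataset completeness: missing values per column
print(df.isnull().sum())

# Data transformation using OrdinalEncoder: text categories -> numeric codes
cat_cols = ["city", "purchased"]
df[cat_cols] = OrdinalEncoder().fit_transform(df[cat_cols])

# Understanding the correlation between features (every column is numeric now)
print(df.corr())

# Splitting the data into training and testing
X = df.drop(columns="purchased")
y = df["purchased"]
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.25, random_state=42, stratify=y
)

# Implementing the SVM model: scale the features, then fit an RBF-kernel SVC
model = make_pipeline(StandardScaler(), SVC(kernel="rbf", C=1.0))
model.fit(X_train, y_train)

# Test model performance
y_pred = model.predict(X_test)
acc = accuracy_score(y_test, y_pred)
print(f"accuracy: {acc:.3f}")
print(classification_report(y_test, y_pred))
```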

Advantages and disadvantages of SVM

Advantages

Effective in High-Dimensional Spaces:

- SVMs remain effective in high-dimensional spaces, even when the number of dimensions exceeds the number of samples, which makes them suitable for text classification and other tasks with a large number of features.

Memory Efficiency:

- SVMs use only a subset of the training points in the decision function, known as the support vectors, which makes the trained model memory efficient. This is useful with large datasets, since only the support vectors need to be kept for prediction.

Versatility:

- SVMs handle both linear and non-linear classification, because kernel functions such as polynomial, RBF, or sigmoid capture complex relationships between features.

Disadvantages

Computational Complexity:

- Training SVMs can become computationally intensive, especially on large datasets. Training complexity is typically between quadratic and cubic in the number of samples, which makes the algorithm impractical for very large datasets.

Choice of Kernel:

- Good performance depends heavily on the choice of kernel function and its parameters. Choosing the right kernel and tuning its parameters is not straightforward and usually requires domain knowledge and experimentation.

Memory Consumption:

- While support vector machines are memory efficient at prediction time, training can consume a lot of memory on large datasets, since it involves storing and processing large matrices.
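A rough way to see the training cost of an SVM grow with the number of samples is to time `SVC.fit` on increasing sample sizes. This is a hedged sketch on synthetic data; absolute timings depend on hardware and data, so only the trend is meaningful.

```python
import time

from sklearn.datasets import make_classification
from sklearn.svm import SVC

timings = {}
for n in (500, 1000, 2000):
    X, y = make_classification(n_samples=n, n_features=20, random_state=0)
    start = time.perf_counter()
    SVC(kernel="rbf").fit(X, y)
    timings[n] = time.perf_counter() - start
    print(f"n={n:5d}  fit time {timings[n]:.3f}s")
```

Doubling `n` typically more than doubles the fit time, which is the super-linear growth described above.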
